Autonomous Pose Tracking with Compositional Reinforcement Learning Policies

Autonomous Pose Tracking with ANYmal

Description

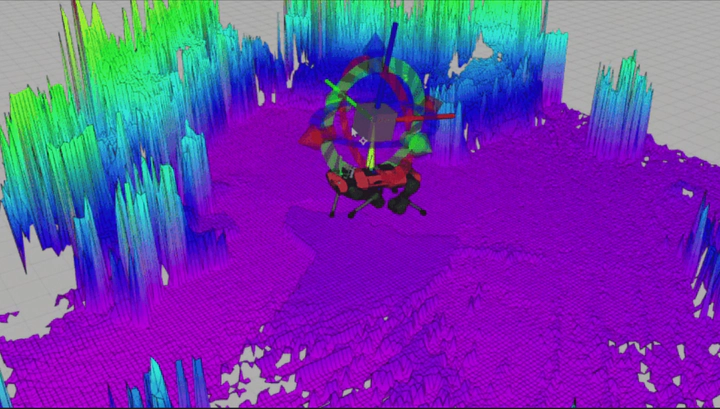

Reinforcement learning offers a promising methodology for developing robust robotic skills, but it typically yields controllers for specific behaviors in isolation. The common approach is to train a robot to learn one target skill in an elaborately designed training setting. To further improve legged maneuverability, it is reasonable to combine such individually learned skills to attain novel and complex movements. Building on previous successes in legged locomotion with deep reinforcement learning, we introduce a compositional control structure that learns pose tracking skills. It takes state and target frame descriptions as input and outputs locomotion commands, tilting commands, and weights as actions. We also demonstrate the generality of this structure by showing that only trivial adaptations are needed when it is migrated to a perceptive controller. Additional elements of our solution that contribute towards integrated behaviors include an elaborate reward design process and the use of a handcrafted controller as a baseline to reveal novel locomotion patterns. Results are demonstrated both in simulation and on a real legged platform, based on the operation modes we develop.
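The interface described above can be illustrated with a minimal sketch. The description does not say how the output weights are applied, so this sketch assumes they blend the joint targets of two low-level skills as a convex combination. All names here (`HighLevelAction`, `compose`, the command layouts, and the stand-in skills) are hypothetical and are not taken from the authors' implementation.

```python
from dataclasses import dataclass
from typing import Callable, List

Vector = List[float]
Skill = Callable[[Vector], Vector]  # maps a command to joint targets

NUM_JOINTS = 12  # ANYmal has 12 actuated joints


@dataclass
class HighLevelAction:
    """Output of the (hypothetical) high-level pose tracking policy."""
    locomotion_cmd: Vector  # assumed layout: [vx, vy, yaw_rate]
    tilting_cmd: Vector     # assumed layout: [roll, pitch]
    weights: Vector         # one blending weight per low-level skill


def normalize(weights: Vector) -> Vector:
    """Clip negative weights to zero and rescale so they sum to one."""
    clipped = [max(w, 0.0) for w in weights]
    total = sum(clipped)
    if total == 0.0:
        # Fall back to a uniform blend when every weight is non-positive.
        return [1.0 / len(clipped)] * len(clipped)
    return [w / total for w in clipped]


def compose(action: HighLevelAction,
            locomotion_skill: Skill,
            tilting_skill: Skill) -> Vector:
    """Blend the joint targets of two skills (assumed composition rule)."""
    w_loco, w_tilt = normalize(action.weights)
    q_loco = locomotion_skill(action.locomotion_cmd)
    q_tilt = tilting_skill(action.tilting_cmd)
    return [w_loco * a + w_tilt * b for a, b in zip(q_loco, q_tilt)]


# Stand-in skills for illustration only; real skills would be learned policies.
def dummy_locomotion(cmd: Vector) -> Vector:
    return [cmd[0]] * NUM_JOINTS


def dummy_tilting(cmd: Vector) -> Vector:
    return [cmd[1]] * NUM_JOINTS


action = HighLevelAction(locomotion_cmd=[0.5, 0.0, 0.0],
                         tilting_cmd=[0.0, 0.2],
                         weights=[3.0, 1.0])
joint_targets = compose(action, dummy_locomotion, dummy_tilting)
print(joint_targets)
```

Under this assumption, the weights let the high-level policy shift smoothly between walking toward the target frame and tilting the base in place. Other composition rules, such as blending in command space or in action-distribution space, would fit the same interface.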